Caching is a powerful way to make systems faster, handle more traffic, and reduce delays. This guide explains caching in simple terms, from the basics to advanced strategies used in big systems like social media or online stores.

What is Caching?

Caching means saving frequently used data in a fast storage area called a cache. This lets the system grab data quickly instead of fetching it from slower sources like a database or API.

Without Cache:

- User → Server → Database (takes time) → Response

With Cache:

- User → Server → Cache (super fast) → Response
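
The difference between the two paths can be seen with a small in-process sketch. It uses Python's standard `functools.lru_cache`; the function `get_product_name` and its 50 ms delay are hypothetical stand-ins for a real database or API call.

```python
import time
from functools import lru_cache


@lru_cache(maxsize=1024)  # keep up to 1024 recent results in memory
def get_product_name(product_id: int) -> str:
    # Hypothetical slow lookup standing in for a database or API call.
    time.sleep(0.05)
    return f"Product {product_id}"
```

Calling `get_product_name(7)` twice pays the simulated delay only once, and `get_product_name.cache_info()` reports the hit and miss counts.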

Why Use Caching?

Caching makes systems better in several ways:

- Speed: Gets data faster, reducing wait times.

- Scalability: Handles more users without overloading the database.

- Reliability: Absorbs sudden traffic spikes and can keep serving cached data when a backend is slow or briefly unavailable.

- Cost Savings: Uses fewer resources for computing and data retrieval.

- Better User Experience: Makes apps and websites feel smooth and responsive.

Caching is essential for high-traffic platforms like social media, e-commerce sites, or video streaming services.

Types of Caching

Here are the main types of caching and where they’re used:

- Client-Side Caching

- Where: In the user’s browser or mobile app.

- Purpose: Speeds up repeat visits by avoiding network calls.

- Examples: Browser cache (stores images, CSS, JS), Service Workers, LocalStorage, or IndexedDB.

- Real-World Example: Facebook saves images and posts locally, so scrolling back is instant.

- Server-Side Caching

- Where: On the backend server.

- Purpose: Avoids reprocessing the same data for dynamic requests.

- Examples: Caching API responses or user sessions.

- Real-World Example: Reddit caches popular posts to avoid database queries.

- CDN Caching (Edge Caching)

- Where: On CDN servers close to users.

- Purpose: Delivers static content like images or videos quickly.

- Examples: Images, videos, CSS, JS files.

- Real-World Example: Netflix uses CDN caching to stream videos with low delay worldwide.

- Database Caching

- Where: Between the app and database.

- Purpose: Stores query results to reduce database work.

- Tools: Redis, Memcached.

- Real-World Example: Shopify caches product listings in Redis during sales.

- Application Caching

- Where: In the app layer (in-memory or distributed).

- Purpose: Saves computed data or API responses.

- Real-World Example: Twitter caches user timelines to avoid rebuilding feeds.

Cache Placement in System Design — Detailed Explanation

Caching can be used at different points in a system to improve speed and reduce load. Here’s how it works at each layer:

- Client-Side Cache

- Location: Browser or mobile app.

- Purpose: Reduces network calls and supports fast reloads or offline use.

- What’s Cached: Static assets (images, CSS, JS), API responses, offline data.

- Example: A shopping app saves product images and cart items locally. When you reopen the app, they load instantly without needing the internet.

- Benefits:

- Saves server and network resources.

- Makes the app feel faster and more responsive.

- Supports offline use (e.g., in Progressive Web Apps).

- CDN / Edge Cache

- Location: Servers close to users, part of a Content Delivery Network (CDN).

- Purpose: Delivers static content quickly, no matter where users are.

- What’s Cached: Images, videos, CSS, JS, some static API responses.

- Example: YouTube caches videos on CDN servers, so they load fast globally.

- Benefits:

- Faster load times for users.

- Reduces traffic to the main servers.

- Lowers global network delays.

- Load Balancer / API Gateway Cache

- Location: Between users and app servers.

- Purpose: Caches HTTP responses to avoid repeated backend requests.

- What’s Cached: Common API responses or page fragments.

- Example: NGINX caches the response for `/api/top-products` to skip app server calls.

- Benefits:

- Reduces backend processing and database load.

- Handles sudden traffic spikes better.

- Increases system throughput.

- Application Server Cache

- Location: In or near the app server (in-memory or distributed).

- Purpose: Stores dynamic or computed data for quick access.

- What’s Cached: API responses, user sessions, business logic results.

- Example: An e-commerce site caches product prices, stock, and reviews for fast page loads.

- Benefits:

- Quick access to frequently used data.

- Fewer database queries for dynamic content.

- Supports scaling without overloading the database.

- Database Cache

- Location: Between the app server and database.

- Purpose: Saves database query results to lighten the database’s load.

- What’s Cached: Common query results or database objects.

- Example: Caching product category queries in Redis for quick retrieval.

- Benefits:

- Boosts database performance.

- Saves money by reducing expensive database operations.

- Prevents database slowdowns during traffic spikes.

Multi-Layer Caching — Best Practice

Large systems usually cache at several layers at once. Each layer targets different types of data and problems:

| Layer | Cache Type | Example Tool | Purpose |
|---|---|---|---|
| Client | Browser/App Cache | Service Worker, LocalStorage | Fast local response |
| CDN | Edge Servers | Cloudflare, AWS CloudFront | Fast static content delivery |
| Load Balancer | Response Cache | NGINX, API Gateway | Reduce backend requests |
| Application | Data/Session Cache | Redis, Memcached | Speed up dynamic data access |
| Database | Query Cache | Redis, MySQL Query Cache (removed in MySQL 8.0) | Avoid repeated database reads |

Real-World Example (E-commerce Platform):

- Client Cache: Stores cart items and user preferences.

- CDN Cache: Holds product images, CSS, and JS files.

- Load Balancer Cache: Saves popular API responses.

- Application Cache: Stores product details, prices, and reviews.

- Database Cache: Caches frequent product category queries.

Outcome:

- Pages load quickly.

- Database load is low.

- System handles many users smoothly.

Diagram Concept: Multi-Layer Cache Flow

Key Takeaway:

- Each layer solves a specific performance issue.

- Combining layers ensures fast responses, scalability, and cost savings.
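
As a rough illustration of how layers combine, the sketch below checks a per-process cache, then a shared cache, then the database, and fills the faster layers on the way back. All three stores are plain dictionaries here; `shared_cache` stands in for something like Redis, and the names and sample data are assumptions made for the example.

```python
from typing import Optional

local_cache: dict[str, str] = {}    # per-process memory, fastest
shared_cache: dict[str, str] = {}   # stand-in for a distributed cache such as Redis
database: dict[str, str] = {"product:1": "Blue Mug"}  # stand-in for the source of truth


def layered_get(key: str) -> tuple[Optional[str], str]:
    """Return (value, name of the layer that answered)."""
    if key in local_cache:
        return local_cache[key], "local"
    if key in shared_cache:
        local_cache[key] = shared_cache[key]   # promote to the faster layer
        return shared_cache[key], "shared"
    value = database.get(key)
    if value is not None:
        shared_cache[key] = value              # fill both layers for later reads
        local_cache[key] = value
    return value, "database"
```

The first read of a key is answered by the database, and later reads are answered by the nearest layer that still holds it.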

Caching Strategies — Detailed Guide

Caching strategies decide how your app uses the cache and database. The right strategy depends on how often data is read or written, how consistent it needs to be, and speed goals. Below are the main strategies with their read/write flows:

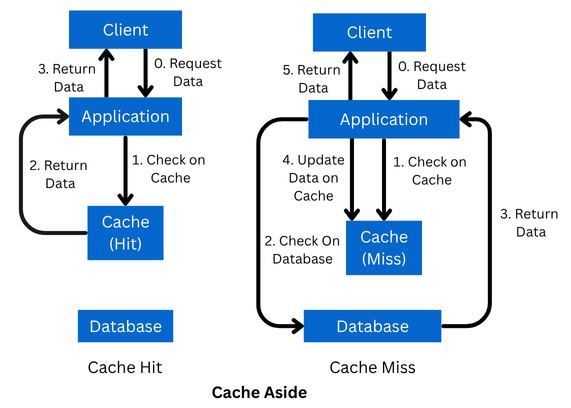

- Cache-Aside (Lazy Loading)

- Description: The app checks the cache first. If data isn’t there (cache miss), it fetches from the database and stores it in the cache.

- Read Flow:

- Check cache → Cache hit → Return data.

- Cache miss → Query database → Store in cache → Return data.

- Write Flow: Write directly to the database, then delete the cached entry (or let its TTL expire) so the next read reloads it.

- Pros: Simple, works well for read-heavy systems.

- Cons: Cached data can be stale until it is invalidated or expires, and the first read after a miss is slower.

- Use Case: APIs, product catalogs, or blog posts with rare updates.
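
A minimal sketch of the cache-aside flow, assuming plain dictionaries in place of a real cache and database. The function names and sample data are hypothetical, and deleting the cached entry on write is one common way to keep the next read fresh rather than the only option.

```python
from typing import Optional

database: dict[int, str] = {1: "Blue Mug"}   # hypothetical source of truth
cache: dict[int, str] = {}                    # hypothetical cache (for example Redis in production)


def read_product(product_id: int) -> Optional[str]:
    if product_id in cache:             # cache hit
        return cache[product_id]
    value = database.get(product_id)    # cache miss: query the database
    if value is not None:
        cache[product_id] = value       # store for later reads
    return value


def write_product(product_id: int, name: str) -> None:
    database[product_id] = name         # the write goes to the database
    cache.pop(product_id, None)         # drop the stale entry; the next read reloads it
```

The application owns both steps here, which is what distinguishes cache-aside from strategies where the cache itself talks to the database.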

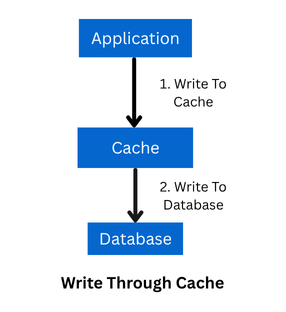

- Write-Through Cache

- Description: Every write updates both the cache and the database synchronously, as part of the same write operation.

- Read Flow:

- Check cache → Hit → Return data.

- Miss → Fetch from database → Update cache → Return data.

- Write Flow: Write to cache → Write to database.

- Pros: Cache stays consistent with the database.

- Cons: Writes are slower because they update two places.

- Use Case: Financial systems or user profiles needing strong consistency.
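
The write-through flow can be sketched as a small class, assuming a dictionary as the backing database. The class name and keys are hypothetical, and error handling is left out.

```python
from typing import Optional


class WriteThroughCache:
    """Keeps a cache and a backing store in step on every write."""

    def __init__(self, database: dict[str, str]) -> None:
        self.database = database            # hypothetical stand-in for a real database
        self.cache: dict[str, str] = {}

    def write(self, key: str, value: str) -> None:
        # Both stores are updated before the write returns.
        # A production version would undo the cache write if the database write failed.
        self.cache[key] = value
        self.database[key] = value

    def read(self, key: str) -> Optional[str]:
        if key in self.cache:               # hit
            return self.cache[key]
        value = self.database.get(key)      # miss: load and remember
        if value is not None:
            self.cache[key] = value
        return value
```

Because the caller waits for both updates, reads after a write see the new value, at the cost of the slower write noted above.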

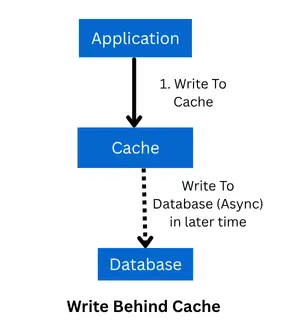

- Write-Behind (Write-Back) Cache

- Description: Write to cache first; database updates happen later in the background.

- Read Flow:

- Check cache → Hit → Return data.

- Miss → Fetch from database → Update cache → Return data.

- Write Flow: Write to cache → Update database later.

- Pros: Very fast writes.

- Cons: Risk of data loss if the cache crashes before updating the database.

- Use Case: Analytics, counters, or systems with heavy writes (e.g., likes or clicks).
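
A minimal write-behind sketch, assuming a dictionary as the database and an explicit `flush()` call in place of a real background worker or timer. The class and method names are hypothetical.

```python
from typing import Optional


class WriteBehindCache:
    """Accepts writes in memory and persists them later in batches."""

    def __init__(self, database: dict[str, int]) -> None:
        self.database = database            # hypothetical stand-in for a real database
        self.cache: dict[str, int] = {}
        self.dirty: set[str] = set()        # keys written but not yet persisted

    def write(self, key: str, value: int) -> None:
        self.cache[key] = value             # fast path: memory only
        self.dirty.add(key)

    def read(self, key: str) -> Optional[int]:
        if key in self.cache:
            return self.cache[key]
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value
        return value

    def flush(self) -> int:
        """Persist pending writes; a background job would call this periodically."""
        pending = list(self.dirty)
        for key in pending:
            self.database[key] = self.cache[key]
        self.dirty.clear()
        return len(pending)
```

Anything still in `dirty` when the process dies is lost, which is the data-loss risk listed under Cons. Repeated writes to the same key between flushes collapse into one database write.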

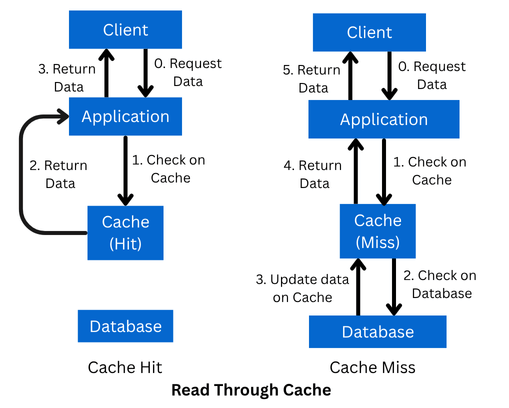

- Read-Through Cache

- Description: The app always reads from the cache. If data is missing, the cache fetches it from the database.

- Read Flow:

- Cache → Hit → Return data.

- Miss → Cache loads from database → Return data.

- Write Flow: Usually writes to database; cache updates based on setup.

- Pros: Simplifies app code since it only talks to the cache.

- Cons: Cache needs to handle database fetching.

- Use Case: Redis-based systems or proxy caches.
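
In a read-through setup the loading logic lives inside the cache rather than in the application. The sketch below assumes the cache is given a `loader` callable at construction; the class name and the loader are hypothetical.

```python
from typing import Callable, Optional


class ReadThroughCache:
    """The caller only asks the cache; the cache loads missing keys itself."""

    def __init__(self, loader: Callable[[str], Optional[str]]) -> None:
        self._loader = loader               # hypothetical function that queries the database
        self._store: dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        if key not in self._store:          # miss: the cache fetches from the database
            value = self._loader(key)
            if value is None:
                return None                 # unknown keys are not cached in this sketch
            self._store[key] = value
        return self._store[key]
```

Application code then reduces to `cache.get(key)`, with no direct database call on the read path.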

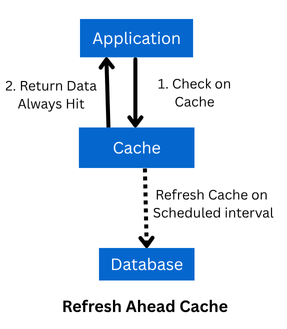

- Refresh-Ahead Cache

- Description: Cache refreshes data before it expires to avoid misses during busy times.

- Read Flow: Always read from cache → Usually a hit.

- Write Flow: Write to cache and database.

- Pros: Prevents cache misses, keeps latency low.

- Cons: More complex; needs scheduled refreshes.

- Use Case: Recommendation systems, trending content, or dashboards.
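
One way to sketch refresh-ahead is a cache whose entries carry an expiry time, plus a `refresh_expiring()` method that a scheduled job would call to reload entries shortly before they expire. The class, its parameters, and the injectable `clock` are assumptions made for the example.

```python
import time
from typing import Callable


class RefreshAheadCache:
    def __init__(self, loader: Callable[[str], str], ttl: float,
                 refresh_window: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.loader = loader                    # hypothetical database or API fetch
        self.ttl = ttl                          # lifetime of an entry in seconds
        self.refresh_window = refresh_window    # reload when this close to expiry
        self.clock = clock
        self.entries: dict[str, tuple[str, float]] = {}  # key -> (value, expires_at)

    def _load(self, key: str) -> str:
        value = self.loader(key)
        self.entries[key] = (value, self.clock() + self.ttl)
        return value

    def get(self, key: str) -> str:
        entry = self.entries.get(key)
        if entry is None or self.clock() >= entry[1]:
            return self._load(key)              # miss or expired: load on demand
        return entry[0]

    def refresh_expiring(self) -> int:
        """Reload entries that expire within refresh_window; run this on a schedule."""
        now = self.clock()
        due = [k for k, (_, expires_at) in self.entries.items()
               if expires_at - now <= self.refresh_window]
        for key in due:
            self._load(key)
        return len(due)
```

As long as the scheduler runs more often than `refresh_window`, hot keys are reloaded before readers ever see them expire.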

Comparison of Caching Strategies

| Strategy | Read Speed | Write Speed | Consistency | Use Case |
|---|---|---|---|---|
| Cache-Aside | Fast | Medium | Eventual | APIs, blogs, product catalogs |
| Write-Through | Fast | Slow | Strong | Financial apps, user profiles |
| Write-Behind | Fast | Very Fast | Eventual | Counters, analytics, high writes |
| Read-Through | Fast | Medium | Eventual (strong if paired with write-through) | Redis proxy, caching libraries |
| Refresh-Ahead | Very Fast | Medium | Eventual (bounded by the refresh interval) | Dashboards, trending content |

Cache Expiration (TTL)

TTL (Time-To-Live) decides how long data stays in the cache:

- Short TTL (seconds/minutes): For real-time data like prices or stock levels.

- Long TTL (hours/days): For static or rarely changing data like user profiles or settings.
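
A TTL can be sketched as an expiry timestamp stored next to each value. The class below is a minimal in-memory version; its name and the injectable `clock` argument are assumptions for illustration, and real caches such as Redis handle expiry for you.

```python
import time
from typing import Callable, Optional


class TTLCache:
    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: dict[str, tuple[str, float]] = {}  # key -> (value, expires_at)

    def set(self, key: str, value: str, ttl_seconds: float) -> None:
        self._entries[key] = (value, self._clock() + ttl_seconds)

    def get(self, key: str) -> Optional[str]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:     # expired: drop it and report a miss
            del self._entries[key]
            return None
        return value
```

A stock level might be stored with `ttl_seconds=30`, while user settings could use several hours.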

Cache Invalidation Strategies

To keep the cache fresh, use these methods:

- Time-based Expiration: Data expires after a set time (TTL).

- Write Invalidation: Update or remove cache when the database changes.

- Manual Invalidation: Clear cache manually (e.g., by an admin or event).
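
Write invalidation and manual invalidation can be sketched with two small helpers. The dictionaries, key format, and function names are hypothetical; the cache is assumed to start warm.

```python
database: dict[str, int] = {"product:1:price": 10, "product:2:price": 25}
cache: dict[str, int] = dict(database)   # assume the cache already holds both entries


def update_price(key: str, new_price: int) -> None:
    """Write invalidation: change the database, then drop the stale cache entry."""
    database[key] = new_price
    cache.pop(key, None)


def invalidate_prefix(prefix: str) -> int:
    """Manual invalidation: clear every cached key under a prefix; returns the count."""
    stale = [k for k in cache if k.startswith(prefix)]
    for k in stale:
        del cache[k]
    return len(stale)
```

An admin action or a deployment event could call `invalidate_prefix("product:")` to force all product entries to reload on their next read.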

Tools & Technologies

| Purpose | Tools |
|---|---|
| In-Memory Cache | Redis, Memcached |
| Distributed Cache | Hazelcast, Couchbase |
| CDN / Edge Caching | Cloudflare, Akamai, AWS CloudFront |
| Browser Cache | HTTP headers, Service Workers |

Advanced Caching Concepts

- Cache Stampede: When many requests miss on the same expired entry at once and all hit the database to refill it. Solutions: Use per-key locking, combine duplicate requests, or add TTL jitter.

- Cache Warming: Load cache with data during startup or deployment.

- Two-Level Cache: Combine local memory cache with a distributed cache (e.g., App → Local Cache → Redis → Database).
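
Two of the stampede defences can be sketched with the standard library: a per-key lock so that only one caller reloads a missing entry, and a helper that randomizes TTLs so keys do not all expire together. The names `SingleFlightCache` and `jittered_ttl` are hypothetical, and expiry is left out of the class to keep the example short.

```python
import random
import threading
from typing import Callable


def jittered_ttl(base_seconds: float, spread: float = 0.1) -> float:
    """Vary a TTL by up to plus or minus `spread` (10% by default)."""
    return base_seconds * (1 + random.uniform(-spread, spread))


class SingleFlightCache:
    def __init__(self, loader: Callable[[str], str]) -> None:
        self._loader = loader                           # hypothetical slow fetch
        self._values: dict[str, str] = {}
        self._locks: dict[str, threading.Lock] = {}
        self._guard = threading.Lock()                  # protects the lock table

    def get(self, key: str) -> str:
        if key in self._values:                         # fast path: already cached
            return self._values[key]
        with self._guard:
            lock = self._locks.setdefault(key, threading.Lock())
        with lock:                                      # one loader per key at a time
            if key not in self._values:                 # re-check after waiting
                self._values[key] = self._loader(key)
            return self._values[key]
```

When several threads request the same cold key, the first one loads it and the rest wait on the lock and then read the cached value.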

Real-World Example: E-commerce Product Page

| Layer | Cached Data |
|---|---|
| Client | Cart items, user preferences |
| CDN | Images, CSS, JS |
| Load Balancer | Popular API responses |
| Application | Product details, pricing, reviews |
| Database | Query results for categories |

Result: Fast page loads, low database load, and high scalability.

Final Thoughts

Caching isn’t just about speed—it’s about building systems that can handle millions of users. To make caching work well:

- Place caches at the right layers.

- Set proper TTL and invalidation rules.

- Use advanced techniques like cache warming or two-level caching.

When done right, caching can cut response times for frequently read data from seconds to milliseconds and help a system support massive traffic.
